Architecture:Specification of BABEL Modules


This page describes a collection of modules and the role each one plays in the control architecture. The complete list of modules that can be downloaded is available here.

Layer 1: HAD Modules

All the interfaces are defined in the previous section.

| Module (ICE) name | Description | Implemented Driver Interfaces |
|---|---|---|
| HAD_MobileBase_Pioneer | Driver for the Pioneer3 DX/AT mobile bases, through a COM serial port and the ARIA library. | DRV_ALIASES, DRV_MOBILE_BASE, DRV_IOBOARD, DRV_BATTERY_LEVEL |
| HAD_MobileBase_Simulator | A simulated 2D mobile base. | DRV_ALIASES, DRV_IOBOARD, DRV_MOBILE_BASE, DRV_2D_RANGE_SCANNER |
| HAD_Laser_Sick_USB | Driver for the SICK laser range scanner via RS422-USB board. Hw version is JLBC/APR-05. | DRV_ALIASES, DRV_2D_RANGE_SCANNER |
| HAD_Laser_Hokuyo | Driver for the HOKUYO laser range scanner via a COM serial port. | DRV_ALIASES, DRV_2D_RANGE_SCANNER |
| HAD_GPS | Driver for COM-connected GPSs. | DRV_ALIASES, DRV_GPS |
| HAD_GasSensors | Driver for the custom-built e-Noses (Hardware Feb-2007). | DRV_ALIASES, DRV_GAS_SENSOR |
| HAD_IMU_XSens | Driver for the XSENS IMU. | DRV_ALIASES, DRV_IMU_SENSOR |
| HAD_Generic_Camera | Driver for any OpenCV/FFmpeg/Bumblebee camera (any camera supported by MRPT's CCameraSensor). | DRV_ALIASES, DRV_CAMERA_SENSOR |

Layer 2: Basic Sensory (BS) Modules

Recall that all these modules share a common interface, as described in the previous section.

BS_IncrementalEgoMotion

- Description: This module collects data from odometry, and possibly from other sources such as visual odometry, to provide incremental estimates of the robot pose.

Unlike most other modules, which start with event sending disabled by default, BS_IncrementalEgoMotion always sends an event for each new action available.

This module will read odometry from one of these sources (it will automatically detect which module is up):

- BA_RoboticBase: For 2D wheels odometry.

- BS_Vision: For stereo vision odometry (Not implemented yet!)

IMPORTANT NOTE: If some module is interested in a detailed list of robot pose increments, it MUST listen for events launched by this module and read all of the increments (see table of events).
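
The note above matters because pose increments are chained: missing a single event corrupts every later pose estimate. The following is a minimal sketch of a listener that composes each 2D increment onto a running pose. `Pose2D`, `compose` and `IncrementAccumulator` are hypothetical names used for illustration only; they are not BABEL or MRPT types, and the real module delivers increments as serialized mrpt::slam::CAction objects.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>

// Hypothetical 2D pose: x, y in meters, phi in radians (not an MRPT type).
struct Pose2D {
    double x;
    double y;
    double phi;
};

// Compose pose `p` with an increment `d` expressed in the local frame of `p`.
inline Pose2D compose(const Pose2D& p, const Pose2D& d) {
    const double c = std::cos(p.phi), s = std::sin(p.phi);
    const double phi = p.phi + d.phi;
    return Pose2D{p.x + d.x * c - d.y * s,
                  p.y + d.x * s + d.y * c,
                  std::atan2(std::sin(phi), std::cos(phi))};  // wrap to [-pi, pi]
}

// Hypothetical event listener: onNewIncrement() is assumed to be called
// exactly once per event sent by BS_IncrementalEgoMotion.
class IncrementAccumulator {
public:
    void onNewIncrement(const Pose2D& inc) {
        // Reject NaN/inf increments early; they would poison the whole chain.
        assert(std::isfinite(inc.x) && std::isfinite(inc.y) && std::isfinite(inc.phi));
        pose_ = compose(pose_, inc);
        ++count_;
    }
    const Pose2D& pose() const { return pose_; }
    std::size_t count() const { return count_; }

private:
    Pose2D pose_{0.0, 0.0, 0.0};
    std::size_t count_ = 0;
};
```

In this sketch, skipping one call to `onNewIncrement` would leave `pose()` permanently offset, which is why a listener should process every event rather than poll only the last action.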

- Interface:

| BABEL service group | BABEL services to implement | Description |
|---|---|---|
| | <cpp> out COMMON::SeqOfBytes act, out boolean error ) </cpp> | Return the last action as a serialized MRPT object of the class mrpt::slam::CAction. This can be a 2D or 3D incremental movement of the robot. |

BS_Vision

- Description: This module collects images from the available cameras and:

- Provides the raw images, as CObservationImage or CObservationStereoImages objects.

- Performs real-time feature tracking from stereo images (if available) and provides the tracked landmarks as an object of the class CObservationVisualLandmarks.

BS_RangeSensors

- Description: This module collects data from laser range scanners and ultrasonic sensors.

Layer 2: Basic Actuators (BA) Modules

BA_RoboticBase

- Description: This module implements DRV_MOBILE_BASE and redirects all the calls to the actual underlying device, either a real robot or the simulator.

This is the module to use when one wants the mobile base to move or stop, or one wants to read odometry.
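
The forwarding role described above can be sketched as a proxy over an abstract driver. This is an illustration under stated assumptions: `MobileBaseDriver`, `setVelocities`, `travelledDistance`, `SimulatedBase` and `RoboticBaseProxy` are all hypothetical names, since the actual DRV_MOBILE_BASE services are defined in the Interfaces section and are not reproduced on this page.

```cpp
#include <cassert>
#include <memory>
#include <utility>

// Hypothetical stand-in for the DRV_MOBILE_BASE driver interface.
class MobileBaseDriver {
public:
    virtual ~MobileBaseDriver() = default;
    virtual void setVelocities(double linear, double angular) = 0;
    virtual double travelledDistance() const = 0;  // toy odometry reading
};

// Toy simulator backend: integrates the commanded speeds over time steps.
class SimulatedBase : public MobileBaseDriver {
public:
    void setVelocities(double linear, double angular) override {
        v_ = linear;
        w_ = angular;
    }
    double travelledDistance() const override { return dist_; }
    void step(double dt) {
        dist_ += v_ * dt;
        heading_ += w_ * dt;
    }
    double heading() const { return heading_; }

private:
    double v_ = 0.0, w_ = 0.0, dist_ = 0.0, heading_ = 0.0;
};

// Sketch of the forwarding module: same interface, every call is redirected
// to whichever backend (real robot or simulator) was plugged in.
class RoboticBaseProxy : public MobileBaseDriver {
public:
    explicit RoboticBaseProxy(std::shared_ptr<MobileBaseDriver> backend)
        : backend_(std::move(backend)) {
        assert(backend_ != nullptr);
    }
    void setVelocities(double linear, double angular) override {
        backend_->setVelocities(linear, angular);
    }
    double travelledDistance() const override {
        return backend_->travelledDistance();
    }
    void stop() { backend_->setVelocities(0.0, 0.0); }

private:
    std::shared_ptr<MobileBaseDriver> backend_;
};
```

With this layout, client code talks only to the proxy and does not need to know whether a Pioneer driver or the simulator sits underneath.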

BA_JoystickControl

- Description: This module reads commands from a joystick and sends the motion commands to BA_RoboticBase. It can be enabled or disabled by holding buttons 0 and 1 down simultaneously for a few moments.
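
The enable/disable gesture can be sketched as a small hold-to-toggle state machine. The class name `JoystickToggle` and the `holdSeconds` parameter are assumptions made for this example; the source does not state how long the buttons must be held.

```cpp
#include <cassert>

// Sketch of a hold-to-toggle detector for buttons 0 and 1.
// `holdSeconds` is a hypothetical parameter, not a documented value.
class JoystickToggle {
public:
    explicit JoystickToggle(double holdSeconds, bool initiallyEnabled = false)
        : hold_(holdSeconds), enabled_(initiallyEnabled) {
        assert(holdSeconds >= 0.0);
    }

    // Feed one joystick sample; `dt` is the time in seconds since the
    // previous sample. Returns whether joystick control is enabled afterwards.
    bool update(bool button0, bool button1, double dt) {
        if (button0 && button1) {
            held_ += dt;
            if (!fired_ && held_ >= hold_) {
                enabled_ = !enabled_;
                fired_ = true;  // toggle once per press; release re-arms
            }
        } else {
            held_ = 0.0;
            fired_ = false;
        }
        return enabled_;
    }

    bool enabled() const { return enabled_; }

private:
    double hold_;
    bool enabled_;
    double held_ = 0.0;
    bool fired_ = false;
};
```

Requiring a release before the next toggle prevents the state from flipping back and forth while the user keeps both buttons pressed.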

Layer 3: Sensory Detector (SD) Modules

SD_PeopleDetector

- Description: This module detects people around the robot using sensor data from range scanners, images, and other sources.

- Interface: TODO. Open question: create a new type of observation, and/or add a specific method to retrieve the detected data.

SD_Local3DMap

- Description: This module maintains a 3D representation of close obstacles around the robot, by fusing data from sonars, laser scanners, and stereo vision.

- Interface: TODO. A specific interface is needed to retrieve:

- A 2D obstacle point map.

- A 2D occupancy grid.

- A 3D point cloud.

Layer 3: Function Executors (FE) Modules

Recall that all these modules implement the Common Actions Interface.

Controls the reactive navigation capabilities of the robot in 2D. If a request is received while navigating, the previous one is automatically marked as cancelled.

- Action: "navigate"

- Action parameters (text lines within 'params' in the service executeAction(...)):

- "target_x = ...": The target location (in meters), X-coordinate in the current sub-map.

- "target_y = ...": The target location (in meters), Y-coordinate in the current sub-map.

- "relative = 0 | 1" (OPTIONAL, default=0): If set to "1", (x,y) coordinates are interpreted as relative to the current robot pose.

- "max_dist = ...": (OPTIONAL) The maximum distance (in meters) from the target location at which navigation is considered successful.

- "max_speed = ...": (OPTIONAL) If supplied, indicates the max. speed (m/s) of the robot. Angular max. speed is automatically derived. This can be used in situations known to be dangerous for the robot.
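
As an illustration of the parameter list above, the following sketch builds the 'params' text for a "navigate" request and checks the success condition implied by max_dist. The helper names `buildNavigateParams` and `targetReached` are hypothetical, and two details are assumptions: that parameter lines are separated by newline characters, and that a non-positive value can stand for "optional parameter not supplied".

```cpp
#include <cassert>
#include <cmath>
#include <sstream>
#include <string>

// Build the text lines for the 'params' argument of executeAction(...).
// Assumption: one "key = value" pair per line, separated by '\n'.
// A non-positive maxDist or maxSpeed means "not supplied" in this sketch.
std::string buildNavigateParams(double targetX, double targetY,
                                bool relative = false,
                                double maxDist = -1.0,
                                double maxSpeed = -1.0) {
    std::ostringstream os;
    os << "target_x = " << targetX << "\n";
    os << "target_y = " << targetY << "\n";
    if (relative) os << "relative = 1\n";  // default is 0, so omit otherwise
    if (maxDist > 0.0) os << "max_dist = " << maxDist << "\n";
    if (maxSpeed > 0.0) os << "max_speed = " << maxSpeed << "\n";
    return os.str();
}

// Success test implied by max_dist: the robot is close enough to the target.
bool targetReached(double robotX, double robotY,
                   double targetX, double targetY, double maxDist) {
    assert(maxDist >= 0.0);
    return std::hypot(targetX - robotX, targetY - robotY) <= maxDist;
}
```

For example, `buildNavigateParams(1.5, -2.0, true, 0.25, 0.5)` yields five lines requesting a relative move with a 0.25 m tolerance and a 0.5 m/s speed limit.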

FE_DoorOpening

Asks a nearby human to open the door.

- Action: "openDoor"

- Action parameters (text lines within 'params' in the service executeAction(...)):

- None.

Layers 2/3: Others

The following modules do not fit within either Actuators or Sensors, but they are required by other modules in layers 2 and 3 or in the higher layers.

Localization_PF

- Global localization and pose tracking for a mobile robot using range sensors (lasers/sonars) and a global map. Do not call this module directly to obtain the current robot pose; use Localization_Publisher instead.

Localization_Publisher

- This module collects the current localization of the robot from a localization particle filter or any SLAM or HMT-SLAM module and publishes it in a uniform format.

| BABEL service group | BABEL services to implement | Description |
|---|---|---|
| | <cpp> out double x, out double y, out double z, out double yaw, out double pitch, out double roll, out string submapID, out string submapLabel, out COMMON::TTimeStamp timestamp, out boolean error ) </cpp> | Return the last estimated pose of the robot (just its mean, without covariance), in coordinates relative to the given sub-map. |
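
To make the "uniform format" concrete, the sketch below mirrors the out parameters of the service above in a plain struct and shows how a planar estimate could be wrapped into it. `PublishedPose` and `fromPlanarEstimate` are hypothetical names, and the 64-bit integer used for the timestamp is an assumption, since COMMON::TTimeStamp is not defined on this page.

```cpp
#include <cassert>
#include <cstdint>
#include <string>

// Mirrors the "out" parameters of the pose service. The struct name and the
// integer timestamp type are assumptions made for this example.
struct PublishedPose {
    double x = 0.0, y = 0.0, z = 0.0;
    double yaw = 0.0, pitch = 0.0, roll = 0.0;
    std::string submapID;
    std::string submapLabel;
    std::uint64_t timestamp = 0;
    bool error = true;  // stays true until a valid estimate is stored
};

// Hypothetical adapter: wraps a planar (x, y, yaw) estimate, such as a 2D
// particle filter might produce, leaving z, pitch and roll at zero.
PublishedPose fromPlanarEstimate(double x, double y, double yaw,
                                 const std::string& submapID,
                                 const std::string& submapLabel,
                                 std::uint64_t timestamp) {
    // The pose is only meaningful relative to a named sub-map.
    assert(!submapID.empty());
    PublishedPose p;
    p.x = x;
    p.y = y;
    p.yaw = yaw;
    p.submapID = submapID;
    p.submapLabel = submapLabel;
    p.timestamp = timestamp;
    p.error = false;
    return p;
}
```

A 3D SLAM source would fill all six pose fields instead; clients reading the struct do not need to know which kind of source produced it.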

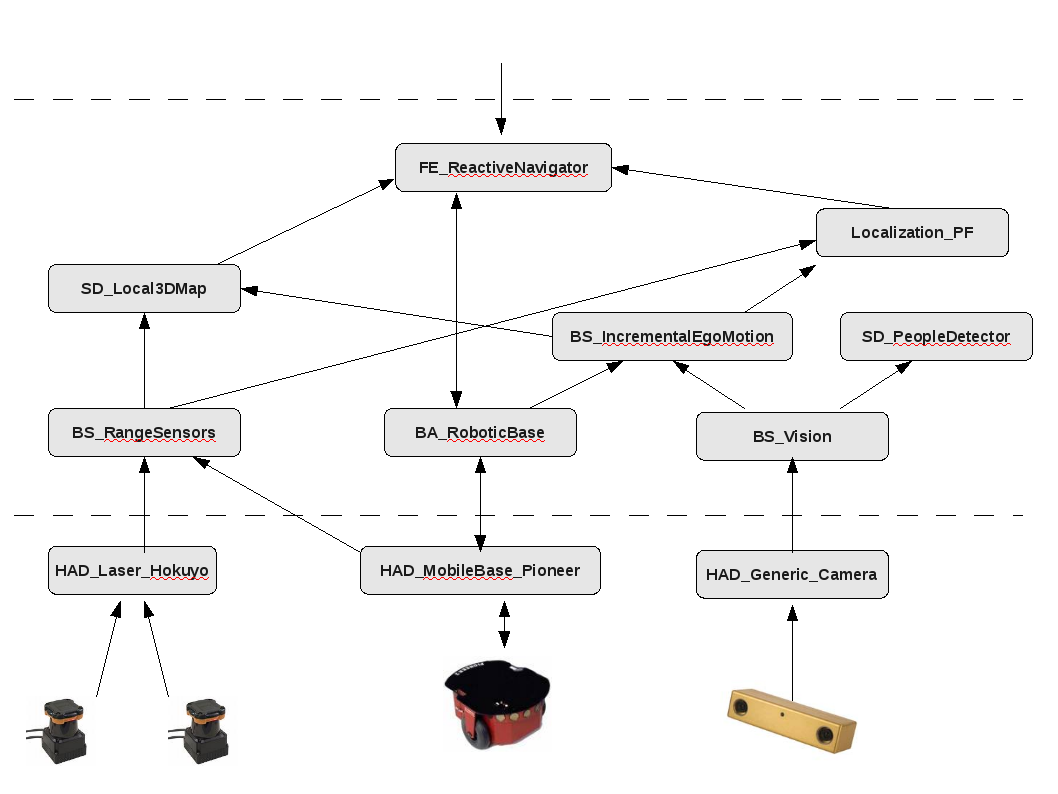

A practical example

An example of the low-level control architecture for a 2D robot with 2 laser scanners and a stereo camera: