Architecture: Brief discussion of the model


Overview

We propose here a layered architecture model for the low-level modules of robotics applications. Our model consists of two well-differentiated parts:

- A hardware abstraction layer (for accessing hardware devices and sensors by their functions, instead of addressing particular devices), and

- two layers of mid-level modules related to sensory data processing and actuator control.

The first layer is inspired by similar, successful designs such as the Windows internal driver model [4] and the Internet protocol stack [5]. The hierarchy is strictly observed for modules in this layer, i.e. they are not related to any other module in the same layer. In contrast, modules in the second and third layers will usually be connected to many other modules in the same layer, which makes them closer to an agent-based architecture.

In the case of our robotics software architecture, low abstraction-level modules can be roughly classified into two groups:

- Modules related to the acquisition of data from the real world. Their mission is to sense, so we name them sensory modules.

- Modules involved in the robot's outputs to the real world. Their mission is to act, so we name them actuator modules.

However, this distinction is not enough for developing anything but trivial systems with a few modules. From our previous experience, we highlight some typical problems:

- Many physical devices serve as input and output simultaneously. For example, a robotic mobile base is an actuator, since it can move, but it is also an input device because of its variety of onboard sensors, such as sonars and odometry.

- It is not easy to separate the concepts of physical devices and their functions. If some sensory or actuator function is needed, a corresponding module should be provided to perform that function, instead of invoking the module of the related physical device. Ignoring this simple rule leads to highly platform-dependent, non-portable robotics applications.
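To make the device/function rule concrete, here is a minimal Python sketch in which one physical device (a simulated mobile base) registers itself as the provider of two functions, one actuator function and one sensory function, while client code looks providers up by function only. All names (`Motion`, `Odometry`, `FunctionRegistry`, `SimulatedBase`) are hypothetical illustrations, not part of BABEL or any existing API.

```python
from abc import ABC, abstractmethod
from typing import Dict, Type, TypeVar

T = TypeVar("T")


class Motion(ABC):
    """Actuator function: move the robot (hypothetical interface)."""

    @abstractmethod
    def move(self, distance_m: float) -> None: ...


class Odometry(ABC):
    """Sensory function: report travelled distance (hypothetical interface)."""

    @abstractmethod
    def read_odometry(self) -> float: ...


class FunctionRegistry:
    """Maps each function interface to the module currently providing it."""

    def __init__(self) -> None:
        self._providers: Dict[type, object] = {}

    def register(self, function: Type[T], provider: T) -> None:
        self._providers[function] = provider

    def get(self, function: Type[T]) -> T:
        provider = self._providers[function]  # KeyError if nobody provides it
        if not isinstance(provider, function):
            raise TypeError(f"provider does not implement {function.__name__}")
        return provider


class SimulatedBase(Motion, Odometry):
    """One device module that both acts (moves) and senses (odometry)."""

    def __init__(self) -> None:
        self._x = 0.0

    def move(self, distance_m: float) -> None:
        self._x += distance_m  # stand-in for a real motion command

    def read_odometry(self) -> float:
        return self._x


base = SimulatedBase()
registry = FunctionRegistry()
registry.register(Motion, base)
registry.register(Odometry, base)

# Client code names functions, never the physical device.
registry.get(Motion).move(0.5)
print(registry.get(Odometry).read_odometry())  # 0.5
```

Replacing `SimulatedBase` with another device module only changes the two `register` calls; the client lines stay the same, which is the portability the rule aims for.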

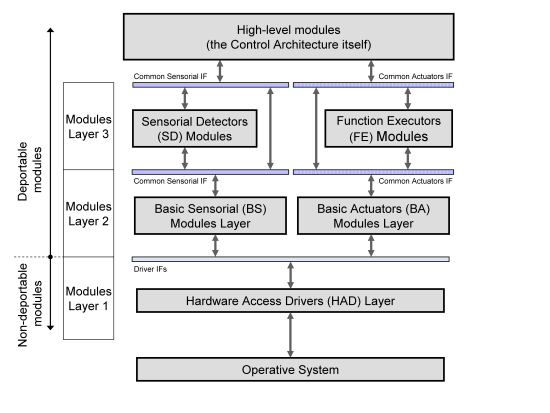

Fig. 1. The proposed structure for low-level modules is divided into three layers, with normalized interfaces between them. The interfaces for accessing modules of the same type (sensory or actuator modules) are the same, regardless of whether they belong to the second or the third layer. However, layer 3 is composed of modules with some degree of data processing, while layer 2 modules act mostly as unified, platform-independent interfaces to hardware devices. Notice that only layer 1 modules are required to be physically onboard the robot.

Taking the previous remarks into account, we now discuss the overall structure of the proposed model, shown in Fig. 1. High-level modules are not addressed here; they appear only as clients of the presented software model. We define three well-differentiated layers and an independent module:

- The Hardware Access Drivers (HAD) layer consists of modules closely attached to some specific hardware or specialized software library. This layer is inspired by the Hardware Abstraction Layer (HAL) found in most operating systems, and it has a similar mission: to abstract hardware operations into functions. For example, instead of sending a movement command directly to a Pioneer mobile base, an abstract interface for mobile bases is defined that accepts such commands, and a given HAD module implements that interface for the case where the underlying mobile base is a Pioneer robot.

- The second and third layers define the sensing and acting modules. Unified interfaces are defined for both, so high-level modules can access any sensory module through the same interface, regardless of whether it belongs to the second or the third layer. The two layers are distinguished by the type of services their modules provide:

  - Layer 2 modules offer unprocessed services (for example, reading laser range scans or setting the mobile base velocities).

  - Layer 3 modules build on the former to offer more elaborate services, for example performing reactive navigation or estimating nearby people from laser scanners and/or vision.

- An independent module, named RPD_Server (Robotic Platform Descriptor Server), acts as the server of a database (DB) that defines the robot (robotic platform, RP) and the associated configuration parameters for low-level modules. For example, if the current RP is ‘Sancho’, the module interfacing a given laser scanner may use COM port 3, whereas on RP ‘Sena’ it uses COM port 13. This DB may also store high-level information accessible to the control architecture, e.g. predefined actions for a given ‘demo’. By selecting the active RPD in the DB, all the modules in the architecture should automatically prepare to execute a given robotic application on a given robot, without manual modification of external configuration files (.ini-like files). The DB also contains a kinematic-chain representation of the sensors on the robot, which is crucial information for, e.g., cameras mounted on a pan-tilt unit (PTU). The information in this DB is loosely related to the list of modules to be loaded in the BABEL Execution Manager, but we do not currently address the automated generation of this list.
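The HAD idea (platform-independent code talks to an abstract mobile-base interface, and one HAD module implements it per concrete base) can be sketched in Python with an abstract base class. The names `MobileBaseHAD`, `PioneerHAD`, `set_velocities` and `stop` are hypothetical, and the Pioneer "driver" only records commands instead of calling any real vendor library.

```python
from abc import ABC, abstractmethod
from typing import List, Tuple


class MobileBaseHAD(ABC):
    """Abstract interface for any mobile base (hypothetical name)."""

    @abstractmethod
    def set_velocities(self, v: float, w: float) -> None:
        """Command linear (m/s) and angular (rad/s) velocities."""


class PioneerHAD(MobileBaseHAD):
    """HAD module for a Pioneer base.

    The hardware call is stubbed out: commands are recorded, not sent.
    """

    def __init__(self) -> None:
        self.sent: List[Tuple[float, float]] = []

    def set_velocities(self, v: float, w: float) -> None:
        # A real driver would translate this into the vendor library's calls.
        self.sent.append((v, w))


def stop(base: MobileBaseHAD) -> None:
    # Platform-independent code: works with any MobileBaseHAD implementation.
    base.set_velocities(0.0, 0.0)


pioneer = PioneerHAD()
pioneer.set_velocities(0.3, 0.1)
stop(pioneer)
print(pioneer.sent)  # [(0.3, 0.1), (0.0, 0.0)]
```

Supporting a different mobile base would mean writing one more `MobileBaseHAD` subclass; `stop` and any other code written against the interface stay unchanged.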
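The unified sensory interface shared by layers 2 and 3 can be illustrated with a short Python sketch. A layer 2 module returns raw laser ranges, and a layer 3 module processes that output behind the same `read()` interface, so a client cannot tell the two apart. All names are hypothetical, and the layer 3 "processing" (keeping only ranges below a threshold) is a deliberately simple stand-in for the more elaborate services mentioned above, such as estimating nearby people.

```python
from abc import ABC, abstractmethod
from typing import List


class SensoryModule(ABC):
    """Unified sensory interface shared by layer 2 and layer 3 modules."""

    @abstractmethod
    def read(self) -> List[float]: ...


class LaserScanModule(SensoryModule):
    """Layer 2: returns unprocessed range readings (metres)."""

    def __init__(self, ranges: List[float]) -> None:
        self._ranges = ranges  # stand-in for data obtained from a HAD module

    def read(self) -> List[float]:
        return list(self._ranges)


class NearObstacleModule(SensoryModule):
    """Layer 3: processes layer 2 output (keeps ranges below a threshold)."""

    def __init__(self, laser: SensoryModule, max_range_m: float) -> None:
        self._laser = laser
        self._max_range_m = max_range_m

    def read(self) -> List[float]:
        return [r for r in self._laser.read() if r < self._max_range_m]


def client(module: SensoryModule) -> int:
    # A high-level client sees both layers through the same interface.
    return len(module.read())


laser = LaserScanModule([0.5, 2.0, 0.9, 4.0])
near = NearObstacleModule(laser, max_range_m=1.0)
print(client(laser), client(near))  # 4 2
```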
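The role of RPD_Server (select the active robotic platform once, and every module fetches its own parameters) can be sketched as a lookup keyed by platform and module. The following is a hypothetical in-memory stand-in written in Python: the class and method names are assumptions, and the data holds only the COM-port example given in the text, not the real contents of the RPD database.

```python
from typing import Any, Dict, Optional

# Hypothetical stand-in for the RPD database:
# robotic platform -> module name -> configuration parameters.
RPD_DB: Dict[str, Dict[str, Dict[str, Any]]] = {
    "Sancho": {"laser_scanner": {"com_port": 3}},
    "Sena": {"laser_scanner": {"com_port": 13}},
}


class RPDServer:
    """Serves configuration for the currently active robotic platform."""

    def __init__(self, db: Dict[str, Dict[str, Dict[str, Any]]]) -> None:
        self._db = db
        self._active: Optional[str] = None

    def select_platform(self, name: str) -> None:
        if name not in self._db:
            raise KeyError(f"unknown robotic platform: {name}")
        self._active = name

    def get_params(self, module: str) -> Dict[str, Any]:
        if self._active is None:
            raise RuntimeError("no active robotic platform selected")
        return self._db[self._active][module]


server = RPDServer(RPD_DB)
server.select_platform("Sena")
print(server.get_params("laser_scanner")["com_port"])  # 13
```

Switching to ‘Sancho’ is a single `select_platform` call; the laser module's code asks for `"laser_scanner"` parameters either way and needs no edited configuration file.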

For the actuator module stack, the process is inspired by ACHRIN’s functional groups [2]. In the proposed model, high-level modules request the execution of actions and can then track the execution process to determine when actions finish or errors occur. Actions can also be suspended and resumed later, or canceled altogether. Actions can also produce any desired amount of data as an execution result, thereby reporting execution results or the reasons for an error.
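The action life cycle described above (request, track, suspend, resume, cancel, and finish with result data or an error reason) maps naturally onto a small state machine. The Python sketch below is one possible reading under stated assumptions: the state names, the transition table and the `Action` class are hypothetical, since the text does not fix an exact state set.

```python
from enum import Enum, auto
from typing import Any, Dict, Set


class ActionState(Enum):
    RUNNING = auto()
    SUSPENDED = auto()
    FINISHED = auto()
    CANCELED = auto()
    FAILED = auto()


# Allowed transitions (assumed); terminal states have no outgoing edges.
_TRANSITIONS: Dict[ActionState, Set[ActionState]] = {
    ActionState.RUNNING: {ActionState.SUSPENDED, ActionState.FINISHED,
                          ActionState.CANCELED, ActionState.FAILED},
    ActionState.SUSPENDED: {ActionState.RUNNING, ActionState.CANCELED},
}


class Action:
    """An action requested by a high-level module and tracked until it ends."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.state = ActionState.RUNNING  # execution starts on request
        self.result: Dict[str, Any] = {}

    def _go(self, new: ActionState) -> None:
        if new not in _TRANSITIONS.get(self.state, set()):
            raise ValueError(f"{self.state.name} -> {new.name} not allowed")
        self.state = new

    def suspend(self) -> None:
        self._go(ActionState.SUSPENDED)

    def resume(self) -> None:
        self._go(ActionState.RUNNING)

    def cancel(self) -> None:
        self._go(ActionState.CANCELED)

    def finish(self, **result: Any) -> None:
        self._go(ActionState.FINISHED)
        self.result = result  # arbitrary execution-result data

    def fail(self, reason: str) -> None:
        self._go(ActionState.FAILED)
        self.result = {"error": reason}

    def is_done(self) -> bool:
        return self.state in (ActionState.FINISHED, ActionState.CANCELED,
                              ActionState.FAILED)


a = Action("go_to_door")
a.suspend()
a.resume()
a.finish(final_pose=(1.0, 2.0))
print(a.state.name, a.is_done(), a.result)
# FINISHED True {'final_pose': (1.0, 2.0)}
```

Rejecting illegal transitions (for example, resuming a canceled action) keeps the tracking information seen by high-level modules consistent.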

Where possible, interfaces have been designed with real-time execution requirements in mind; hence, timing restrictions are imposed.
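The text does not say how timing restrictions are enforced. One simple possibility, assuming each interface call carries a maximum latency, is to measure the call and report whether the bound was met. The Python sketch below (the helper name `call_with_deadline` is hypothetical) only detects violations after the fact; it does not preempt a slow call, which would require support from the runtime or operating system.

```python
import time
from typing import Callable, Tuple, TypeVar

T = TypeVar("T")


def call_with_deadline(fn: Callable[[], T], max_latency_s: float) -> Tuple[T, bool]:
    """Run fn and report whether it completed within max_latency_s.

    Violations are detected after the call returns; fn is not interrupted.
    """
    start = time.monotonic()  # monotonic clock: immune to wall-clock changes
    value = fn()
    elapsed = time.monotonic() - start
    return value, elapsed <= max_latency_s


reading, on_time = call_with_deadline(lambda: [1.2, 0.8], max_latency_s=0.05)
print(reading, on_time)
```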